The “New Era of Software Engineering” is a three-part series exploring how AI is redefining software delivery. As code becomes automated, the real challenge shifts to clearly defining the right intent. We introduce the role of the Intent Engineer, share our Intent Engineering methodology, and provide hands-on insights from SQUER’s first dedicated Intent Engineer — outlining how engineering intent becomes the key capability in the age of AI.

At SQUER, we've spent the last few years building software side by side with our customers. Mostly in financial services, mostly in complex domains, mostly under regulatory pressure. We've shipped with whole teams, but mainly deployed Impact Pairs (a Coding Architect and a Software Engineer) to work alongside our customers' engineering departments, enabling their teams and working with them hands-on, on a daily basis.

That model has proven its value: over 100 SQUERies working with our clients, and an 87% retention rate, speak for themselves.

But something fundamental has changed. And we'd be lying to ourselves if we pretended it hasn't.

The shift nobody prepared us for

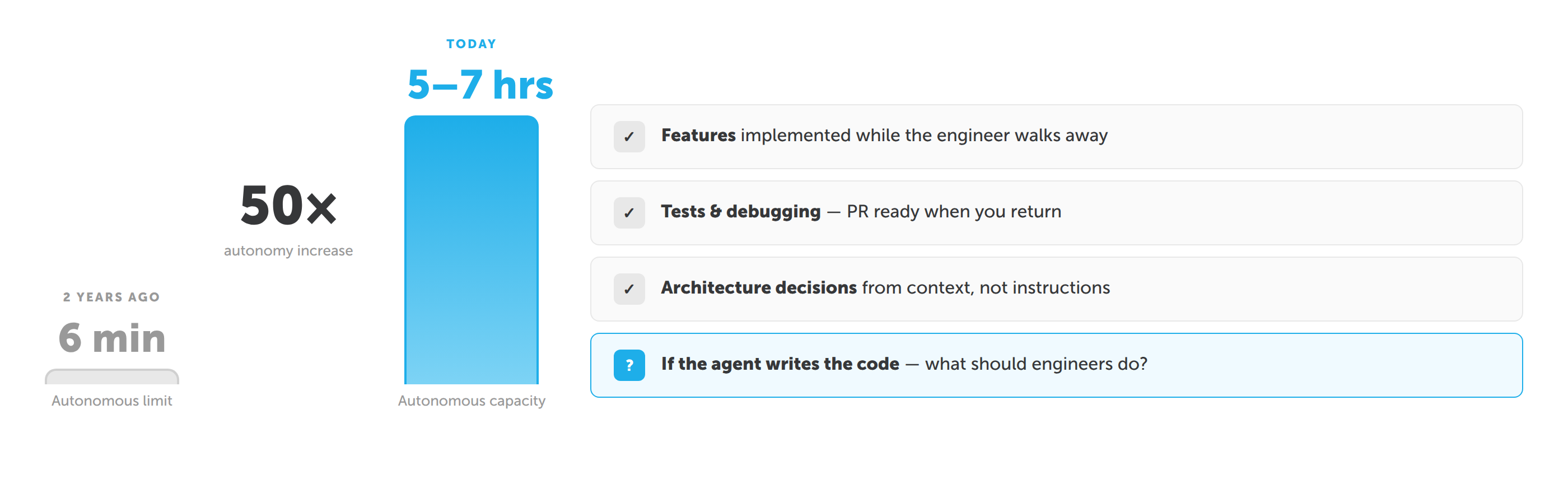

Two years ago, the best AI coding models could work autonomously for about six minutes. Today, agents like Claude Code work autonomously for five to seven hours, implementing features, writing tests, debugging, creating pull requests. That's not a marginal improvement. That's a different category.

When we first experimented with Claude Code, the results were underwhelming. The agents struggled with context, broke tests, and often produced more cleanup work than value.

But starting in early 2026, something changed. Iteration after iteration, the systems became dramatically more reliable. Today, an engineer can describe a feature, point the agent at the codebase, step away, and come back to a working implementation, green tests, and a ready-to-review PR.

The natural question was: if the agent is writing the code, what should the engineer be doing?

The problem isn't code. It's intent.

Here's what we observed across dozens of customer projects: the biggest challenge was never the coding itself. It was the back-and-forth between the business department and the development team. Requirements that described solutions instead of problems. User stories that were technically precise but business-vague. Acceptance criteria that tested implementation details instead of outcomes.

AI agents amplify this problem dramatically. A human developer can compensate for a vague requirement: they'll walk over to the product owner's desk and ask. An AI agent can't. Give it an ambiguous instruction, and it will confidently build exactly the wrong thing.

We realised that the critical skill in AI-assisted software development is not writing code. It's defining the intent clearly enough that an AI agent can execute it without ambiguity.

That's a fundamentally different job than what a Coding Architect or a Requirements Engineer does today.

Enter the Intent Engineer

So we created a new role: the Intent Engineer.

The Intent Engineer lives in the business department. Not in IT. They speak the language of the domain (insurance claims, payment processing, regulatory compliance), not the language of frameworks and APIs.

Their job is to extract the intent behind what stakeholders want, enrich it with the domain context that AI agents need to execute well, and continuously validate that the outcomes match the original business need.

This is not a rebrand of the Requirements Engineer. And it's not a Product Owner with a new title. The difference is fundamental, and it becomes visible the moment you try to hand traditional artefacts to an AI agent.

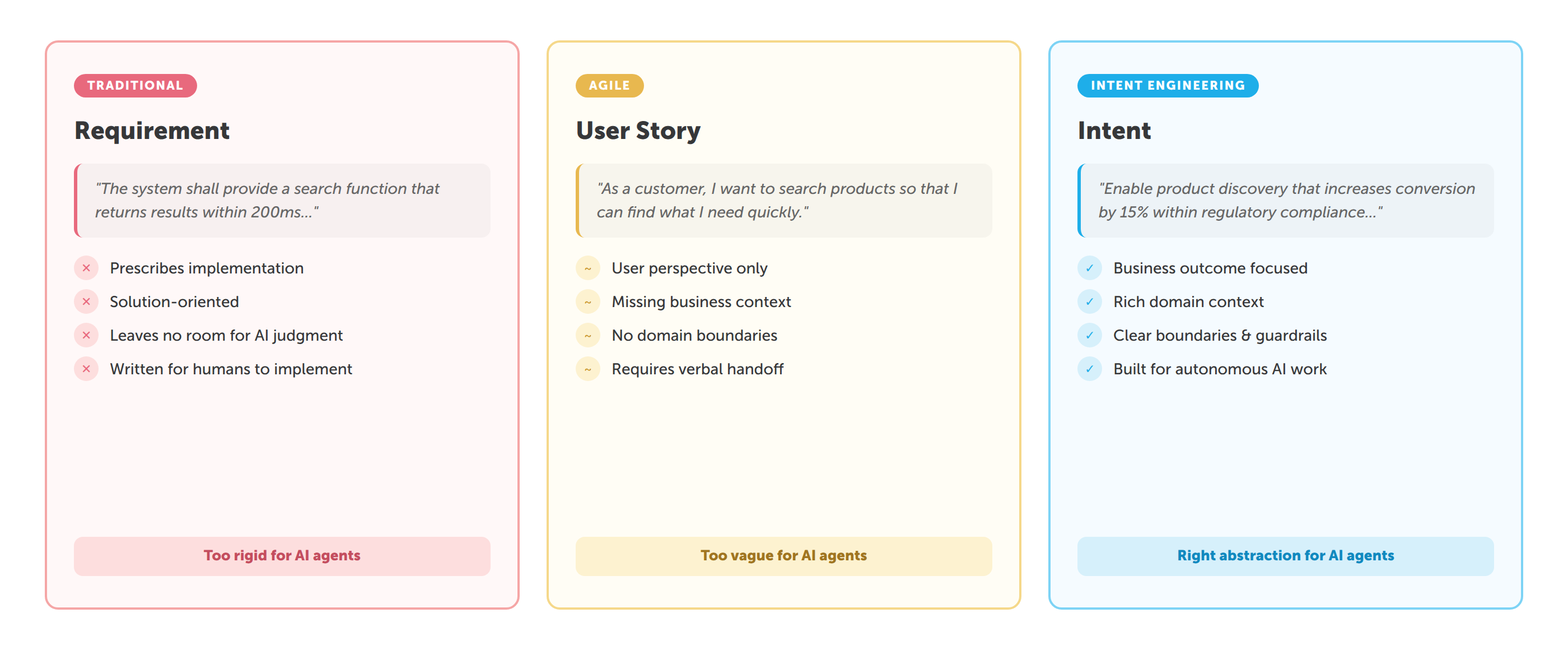

Requirements tell the agent what to build. "Build a REST endpoint that accepts claim submissions and validates mandatory fields against a JSON schema." The solution is already decided. The agent becomes a typist, translating a predetermined design into code. If the chosen design isn't optimal, the agent will faithfully implement the suboptimal version. Worse: the agent can't push back on a bad architecture. It doesn't know why this endpoint exists.

User Stories tell the agent who wants something. "As a claims adjuster, I want to see a list of missing fields so that I can request them from the customer." Better: there's a user and a goal. But user stories are deliberately thin. They assume a conversation will fill in the gaps. That works when a human developer can walk over to the product owner's desk and ask "What counts as a complete claim in property insurance versus auto insurance?" An AI agent can't have that hallway conversation. It will either hallucinate the answer or produce something generic.

Intent tells the agent why something matters and gives it the context to figure out the rest. "Incoming claims must be checked for completeness before a coverage review can begin, because 38% of claims currently arrive incomplete, causing an average 2.4-day delay per follow-up cycle, 1.3 rounds of back-and-forth per case, and 18 minutes of adjuster time per follow-up. A complete claim in auto insurance requires police report number and vehicle registration; in property insurance it requires damage photos and building year."

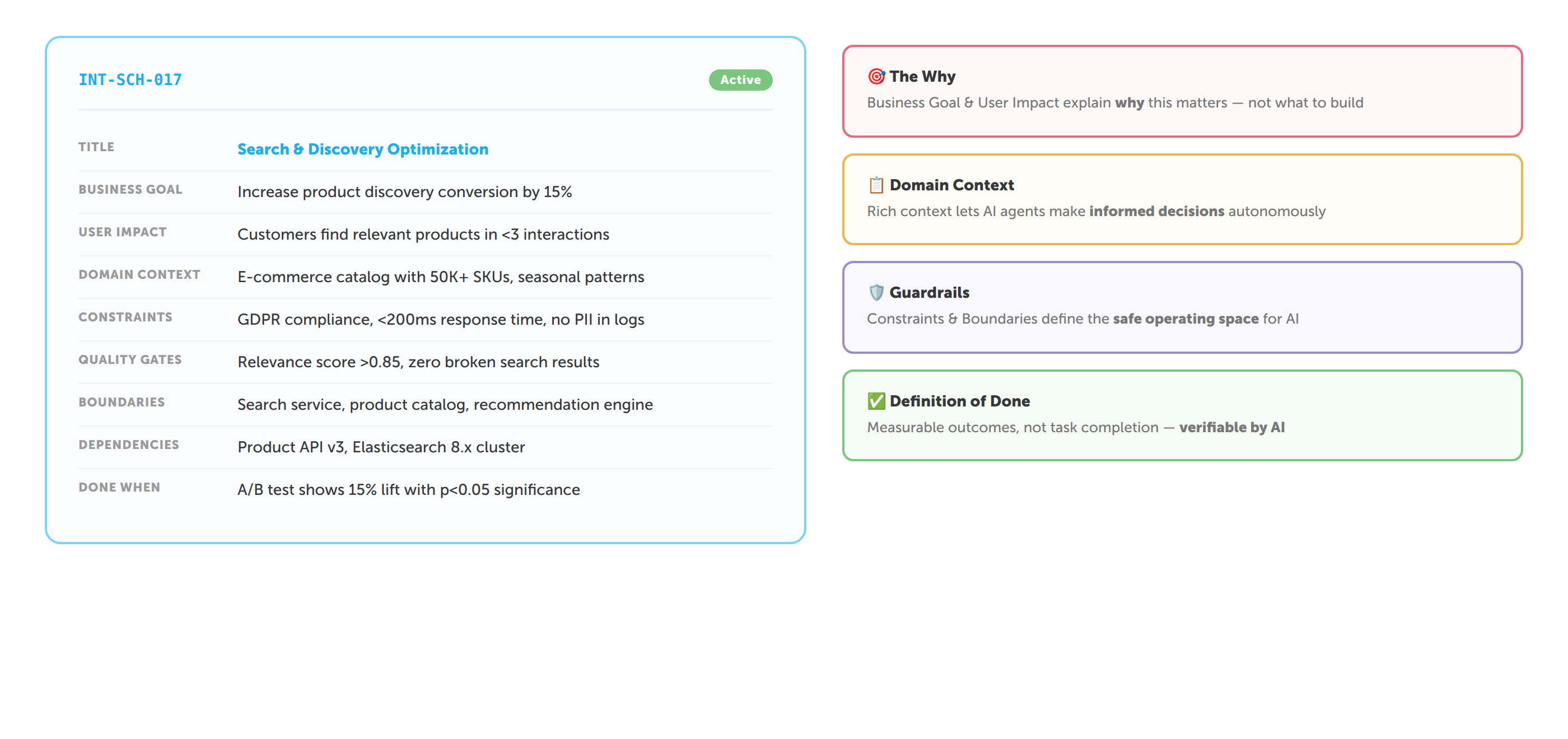

Notice what changed. The agent now understands the business pain (2.4-day delay), the scale of the problem (38%), the domain rules (different completeness criteria per insurance line), and the success condition (completeness before coverage review). It has enough context to make intelligent implementation decisions, choosing the right data model, designing the validation logic, even suggesting optimisations the human hadn't considered.
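To make the domain rules concrete, here is a minimal sketch of the completeness check described in the intent above. The two required fields per insurance line come from the intent text; the identifiers (`REQUIRED_FIELDS`, `Claim`, `missing_fields`, `is_complete`) and the dictionary-based claim shape are assumptions made for illustration, not something an agent would necessarily choose.

```python
from dataclasses import dataclass, field

# Required fields per insurance line, as stated in the intent.
# The key and field names are hypothetical identifiers for this sketch.
REQUIRED_FIELDS = {
    "auto": ("police_report_number", "vehicle_registration"),
    "property": ("damage_photos", "building_year"),
}


@dataclass
class Claim:
    insurance_line: str
    data: dict = field(default_factory=dict)


def missing_fields(claim: Claim) -> list[str]:
    """Return the required fields that are absent or empty for this claim."""
    if claim.insurance_line not in REQUIRED_FIELDS:
        raise ValueError(f"unknown insurance line: {claim.insurance_line!r}")
    required = REQUIRED_FIELDS[claim.insurance_line]
    return [name for name in required if not claim.data.get(name)]


def is_complete(claim: Claim) -> bool:
    """A claim may proceed to coverage review only if nothing is missing."""
    return not missing_fields(claim)


claim = Claim("auto", {"police_report_number": "PR-123"})
print(missing_fields(claim))  # ['vehicle_registration']
print(is_complete(claim))     # False
```

Returning the list of missing fields, rather than only a yes/no answer, also covers the adjuster's goal from the user story: knowing what to request from the customer.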

This is the core insight: AI agents don't need more precise instructions. They need more context and more freedom. Requirements over-constrain the solution space. User stories under-specify the problem space. Intent hits the sweet spot: it's precise about the what and why, but deliberately open about the how.

We've seen this play out consistently in practice. When we hand an AI agent a well-written intent with rich domain context, the quality of the output is dramatically better than when we hand it a set of user stories, even if the user stories are well-written by conventional standards. The agent leverages the business context to make hundreds of micro-decisions that would otherwise require human intervention. Field validation order, error message wording, edge case handling, API design: decisions that previously generated Slack threads and meeting invitations now happen autonomously, and they happen correctly, because the agent understands the underlying purpose.

What Intent Engineers actually do

We've developed a structured methodology around intent capture. At its core, an Intent is a document with eleven fields, including business context, current state, target state, success criteria, constraints, domain knowledge, stakeholders, dependencies, and priority.
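As a rough illustration, the nine fields named above could be modelled as a simple data structure. This is a sketch under stated assumptions: the class name `Intent`, the field types, the defaults, and the `empty_fields` helper are all hypothetical, and the example values are condensed from the claims example earlier in this article.

```python
from dataclasses import dataclass, field, fields


@dataclass
class Intent:
    # Field names follow the list above; types and defaults are assumptions.
    business_context: str
    current_state: str
    target_state: str
    success_criteria: list[str]
    constraints: list[str] = field(default_factory=list)
    domain_knowledge: list[str] = field(default_factory=list)
    stakeholders: list[str] = field(default_factory=list)
    dependencies: list[str] = field(default_factory=list)
    priority: str = ""


def empty_fields(intent: Intent) -> list[str]:
    """Names of fields still blank, as a prompt for further discovery."""
    return [f.name for f in fields(intent) if not getattr(intent, f.name)]


intent = Intent(
    business_context="Claims must be checked for completeness before coverage review.",
    current_state="38% of claims arrive incomplete; 2.4-day delay per follow-up cycle.",
    target_state="Claims are checked for completeness before a coverage review begins.",
    success_criteria=["Completeness is verified before coverage review starts"],
)
print(empty_fields(intent))
# ['constraints', 'domain_knowledge', 'stakeholders', 'dependencies', 'priority']
```

A helper like `empty_fields` is one way to show at a glance which parts of an intent still need discovery work before it is handed to an agent.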

The "current state" is where most of the value lives. When an Intent Engineer spends a morning shadowing a claims adjuster and discovers that 38% of incoming claims are incomplete, causing an average 2.4-day delay per follow-up cycle, that's the kind of context that makes an AI agent dangerously effective.

We've found that the richer the context, the better the AI output. Not more instructions. More context. There's a meaningful difference.

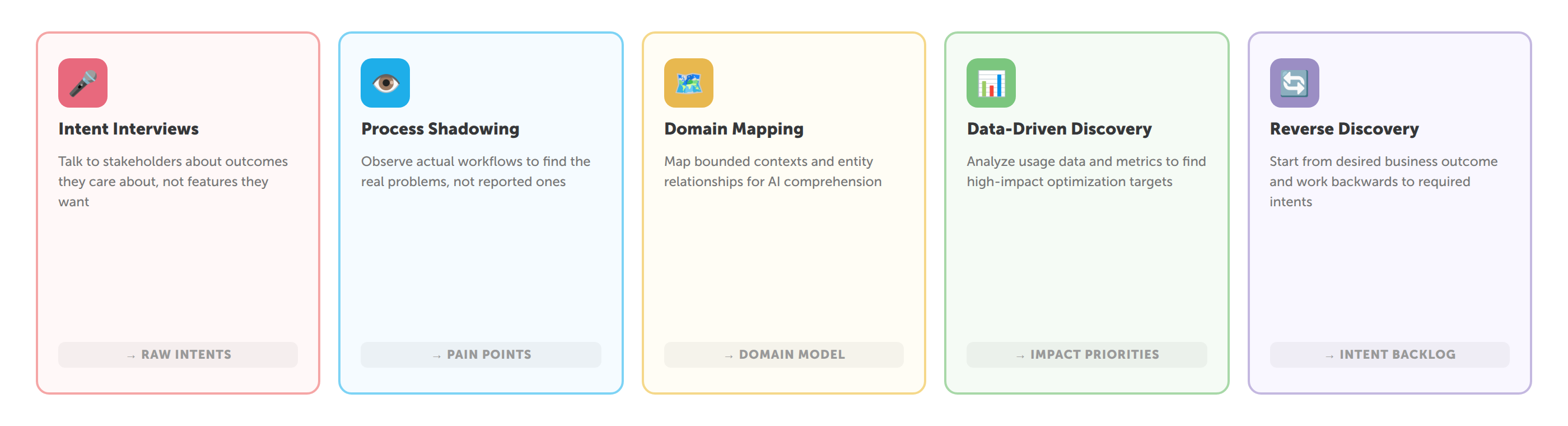

Intent Engineers use five core discovery methods: intent interviews (structured conversations with seven standard questions), process shadowing (observing daily work to find workarounds and friction), domain mapping (event storming, domain storytelling), data-driven discovery (analysing error rates, throughput times, support ticket patterns), and reverse discovery (clustering an existing 47-story backlog into five focused intents).

The methodology is documented in detail in our internal Intent Engineering Playbook, which we plan to make publicly available.

The other half: the Systems Engineer

If the Intent Engineer owns the what and why, someone needs to own the how. That's the Systems Engineer, the second role in what we call a Focused Impact Pair.

The Systems Engineer doesn't write code. They orchestrate AI agents that write code. They make architecture decisions, configure quality gates, manage the CI/CD pipeline, and review agent output. Think of them as a conductor rather than a musician.

A typical day: the Intent Engineer delivers a prioritised intent with business context and measurable success criteria. The Systems Engineer opens the AI Development Platform (a governed environment that orchestrates AI agents, enforces quality gates, and maintains a full audit trail). We've built one at SQUER that we use ourselves and provide to our customers. The engineer points the platform at the intent and the existing codebase. The agent analyses the architecture and proposes an implementation plan. The engineer reviews and adjusts. The agent implements autonomously: writing code and tests, fixing errors, iterating. The Systems Engineer monitors and intervenes only for architectural decisions.

By end of day, the Intent Engineer demos the result to the business department. Feedback flows into the next intent.

Two people. AI agents. No Scrum Master, no dedicated QA, no separate DevOps team. The overhead decreases because the agents handle the volume, and the two humans handle the judgment.

From Intent to Spec to Code

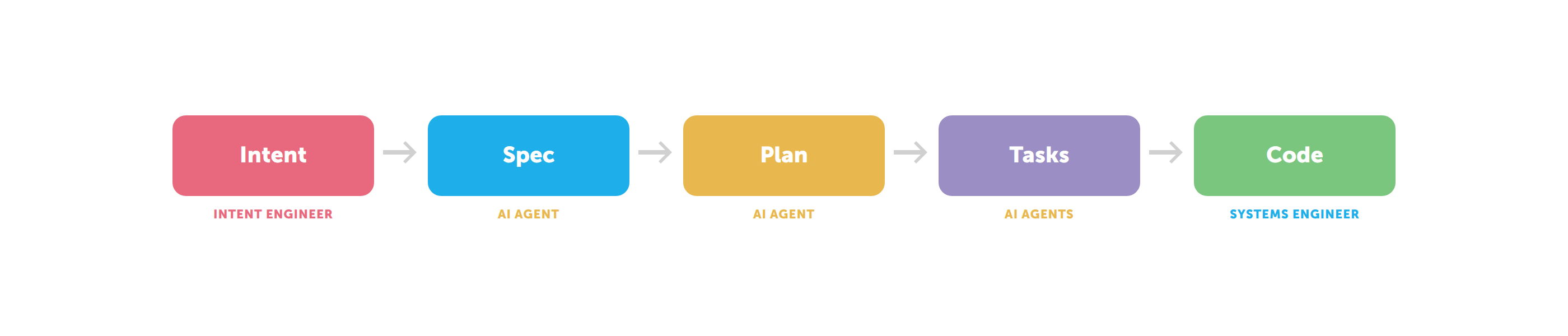

One thing we've been exploring is how intents connect to the broader developer tooling ecosystem and to professional enterprise software development. Specifically, we've been looking at GitHub's spec-kit for spec-driven development.

The mapping turns out to be remarkably clean:

The intent (written by the Intent Engineer) captures the what and why. The spec (co-created with the Systems Engineer) translates this into testable user stories, functional requirements, and a domain model. From there, spec-kit's workflow takes over (plan, tasks, implement), all driven by AI agents.

Intent becomes the upstream artifact that feeds spec-driven development. They're not competing concepts; they're complementary layers. Intent is strategy. Spec is tactics. Code is execution.

Why this matters now

We're not the first to talk about AI changing software development. Everyone is. But most of the conversation focuses on the tools: which model is fastest, which IDE has the best integration, how many lines of code the agent can generate per hour.

That's the wrong conversation.

The right conversation is about roles and organisational design. When code generation becomes cheap and fast, the bottleneck shifts upstream, to understanding problems, capturing domain knowledge, defining success criteria. The organisations that figure this out first will move at a fundamentally different speed.

We believe the Intent Engineer is the missing role in AI-assisted software delivery. Not prompt engineering (that's a technique, not a role). Not product management (that's strategy, not delivery). The Intent Engineer sits precisely at the interface between business need and technical execution, the exact spot where AI agents need the most help.

What we're building at SQUER

The Intent Engineer is one piece of a larger puzzle we're calling The New Era of Software Engineering. It includes:

- The Focused Impact Pair model (Intent Engineer + Systems Engineer + AI agents) as a replacement for traditional delivery teams

- The SQUER AI Engineering Platform, which deploys into the customer's infrastructure with governance, compliance (EU AI Act, DORA, MaRisk), quality gates, and a full audit trail

- An Intent Engineering Playbook documenting the methodology, patterns, tools, and anti-patterns

- Two offerings: DELIVER (we send the Impact Pair) and ENABLE (we help your organisation build its own)

We've been developing this model since 2025. We use it internally. We're piloting it with customers.

What's next

Every major shift in software engineering has been about removing a bottleneck. Agile removed the communication bottleneck between dev and business. DevOps removed the deployment bottleneck between dev and ops.

The next bottleneck to remove is the gap between business intent and technical execution. That's what the Intent Engineer does.

Spotify didn't trademark the Squad Model. Basecamp didn't trademark Shape Up. They defined the frameworks, published them, and the industry adopted the terminology. We believe the same will happen with Intent Engineering. We're publishing our methodology openly because we think the industry needs it and because we'd rather lead the conversation than react to it.

If you're a CTO or engineering leader in financial services thinking about what AI agents mean for your delivery organisation, we should talk. Not about tools. About roles.

PS: If you'd rather explore this on your own first, keep an eye on this blog. The Intent Engineering Playbook will be published here. And if you'd like to see a Focused Impact Pair in action before committing to anything: that's exactly what our DELIVER offering is for. 👋